Skepdick wrote: ↑Fri Aug 23, 2019 7:40 am
PTH wrote: ↑Thu Aug 22, 2019 2:48 pm
If it's a fake, it matters. If it was conscious, it could look silent and be laughing on the inside. And we'd be none the wiser.

And yet, we almost agreed that if we can't tell the difference between a machine and a human then it is conscious.

Almost, but if we put it like that we'd still be disagreeing.

It's not the absence of difference that works for me. It's the demonstration of how we eliminated the difference. If what's in the black box is a Chinese Room, we won't have consciousness.

Skepdick wrote: ↑Fri Aug 23, 2019 7:40 am
PTH wrote: ↑Thu Aug 22, 2019 2:48 pm
Why does that matter? Because I know I have all kinds of mental stuff going on inside. How do I know if anyone else does? I think that's one of those pointless doubts, best handled with the remark by Bertrand Russell:

I once received a letter from an eminent logician, Mrs. Christine Ladd-Franklin, saying that she was a solipsist, and was surprised that there were no others.

Then is it not a pointless doubt to doubt whether an artificial consciousness is "really" laughing on the inside?

Is it not a pointless doubt to wonder if "others are like you on the inside"?

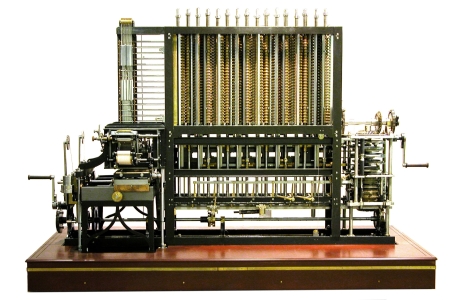

I think it's fine to avoid pointless doubts about the consciousness of other people, while at the same time not ascribing consciousness to things. Particularly as we do know a difference engine isn't laughing on the inside, as we can fully explain its laughter from the gears we engaged. If we stop turning the handle, it stops laughing.

And it was never really laughing at all, at any time.

Again, recall the practical situation. We don't have Nexus 6 Replicants making an eloquent case for civil rights. The only point in thinking about imaginary machines that might look conscious is if it tells us something useful about how to better understand features of our own consciousness. Like laughing at a joke we understand.

And, just to be clear, this is all in a context where we don't yet have a really good understanding of consciousness. So I'm not at all saying "this fails to conform to the Universally Accepted Canon of Consciousness", because there isn't one. I'm really just saying a narrative that pulls up at the border of all our internal mental stuff, and says "I'm not getting lost in that morass", won't give a satisfying explanation.

(For no particular reason, I've a vague memory of a Peter Cook gag about the research agenda of the Munich Institute of Ha-Hamakin: "It's funny if a man slips on a banana skin. But if a man slips on two banana skins, we are studying is that twice as funny or half as funny?")

Skepdick wrote: ↑Fri Aug 23, 2019 7:40 am
Surely, a trivial part of a Turing test would be to have a conversation about art, emotions, feelings, empathy, love, compassion, poetry, creativity and all those qualitative properties we, humans, cherish? And if the machine is able to reciprocate and engage in the conversation to the point where it fools you, is it not a pointless doubt to doubt whether it "really has mental stuff going on inside"?

No, because I could read someone's book about art and such, and be struck by how well the book seemed to express the human qualities involved, and by how each chapter seemed to anticipate where the previous chapter left me. But the book isn't conscious.

The machines we currently have are just really good at producing relevant information.

Skepdick wrote: ↑Fri Aug 23, 2019 7:40 am
Alas, if machines were to become so sophisticated - I doubt they could cherish the same things a human cherishes, so are we still talking about inventing a mechanical consciousness here, or are we talking about inventing a mechanical human?

Are we talking about the sufficiency for consciousness, or the necessity for humanness?

Oh, absolutely, I expect a conscious entity of some other type would have different interests. But isn't a lot of it the internal life? I expect a cat is conscious, just not smart enough to talk.